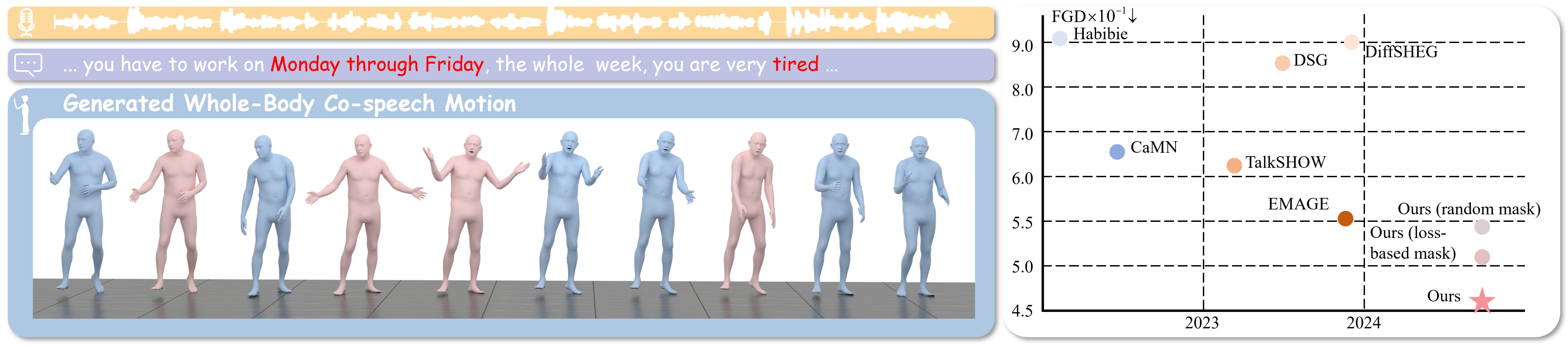

EchoMask: Speech-Queried Attention-based Mask Modeling for Holistic Co-Speech Motion Generation

📋 Abstract

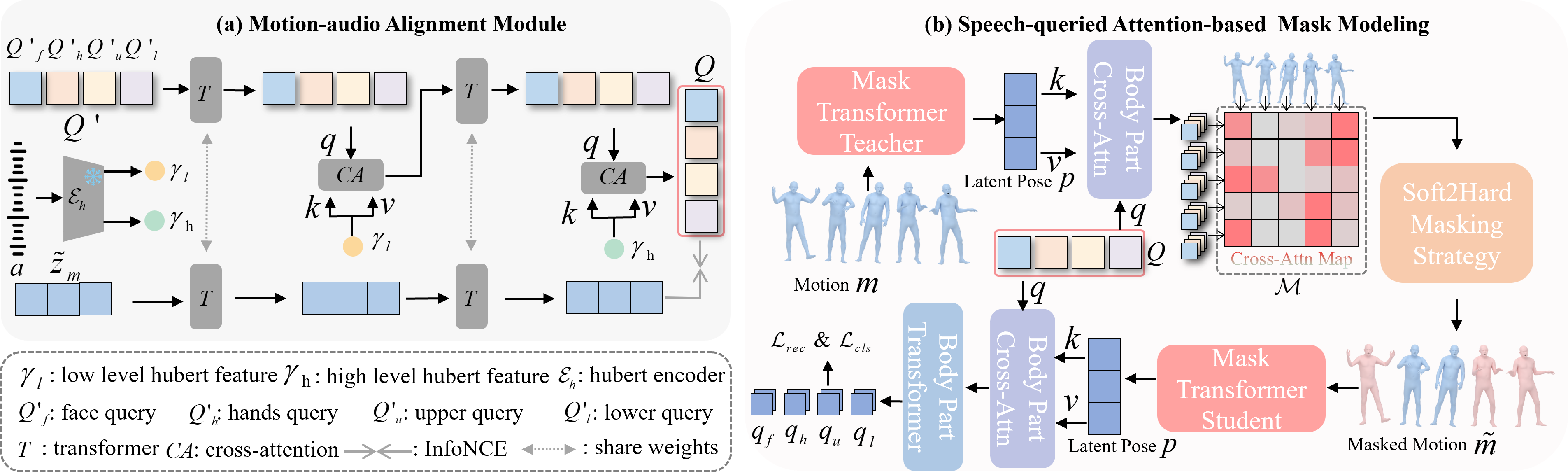

Masked modeling frameworks have shown promise in co-speech motion generation. However, they struggle to identify semantically significant frames for effective motion masking. In this work, we propose a speech-queried attention-based mask modeling framework for co-speech motion generation. Our key insight is to leverage motion-aligned speech features to guide the masked motion modeling process, selectively masking rhythm-related and semantically expressive motion frames. Specifically, we first propose a motion-audio alignment module (MAM) to construct a latent motion-audio joint space. Both low-level and high-level speech features are projected into this space, where learnable speech queries produce motion-aligned speech representations. Then, a speech-queried attention mechanism (SQA) computes frame-level attention scores through interactions between motion keys and speech queries, steering selective masking toward motion frames with high attention scores. Finally, the motion-aligned speech features are also injected into the generation network to facilitate co-speech motion generation. Qualitative and quantitative evaluations show that our method outperforms existing state-of-the-art approaches and produces high-quality co-speech motion. The code will be released at https://github.com/Xiangyue-Zhang/EchoMask.
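To make the "learnable speech queries" idea concrete, here is a minimal, dependency-free sketch of queries cross-attending to speech features projected into a shared space. It is not the released EchoMask code. The function names (`cross_attention`, `project`), the dimensions, and the choice to stack projected low-level and high-level features as one token set are all illustrative assumptions, and the alignment objective that makes the joint space motion-aligned during training is not shown.

```python
import math
import random

def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both lists of lists."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def transpose(m):
    return [list(col) for col in zip(*m)]

def softmax(row):
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V."""
    d = len(queries[0])
    scores = matmul(queries, transpose(keys))
    weights = [softmax([s / math.sqrt(d) for s in row]) for row in scores]
    return matmul(weights, values)

def project(features, weight):
    """Hypothetical linear projection into the joint motion-audio space."""
    return matmul(features, weight)

rng = random.Random(0)
T, d_low, d_high, d_joint, n_queries = 6, 4, 8, 5, 3

def rand_matrix(rows, cols):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]

low_level = rand_matrix(T, d_low)    # stand-in for low-level acoustic features
high_level = rand_matrix(T, d_high)  # stand-in for high-level speech features
w_low, w_high = rand_matrix(d_low, d_joint), rand_matrix(d_high, d_joint)

# List "+" concatenates, so the queries attend over all 2T projected tokens.
speech_tokens = project(low_level, w_low) + project(high_level, w_high)
speech_queries = rand_matrix(n_queries, d_joint)  # learnable in training; random here
aligned = cross_attention(speech_queries, speech_tokens, speech_tokens)

# Sanity check: identical keys give uniform weights, so the output is the mean value.
print(cross_attention([[1.0, 0.0]], [[1.0, 0.0], [1.0, 0.0]], [[2.0, 0.0], [4.0, 2.0]]))
# → [[3.0, 1.0]]
```

Each row of `aligned` is one query's summary of the speech signal in the joint space; in a trained model these rows would be the motion-aligned speech features that the abstract describes feeding into SQA and the generator.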

🎬 Video

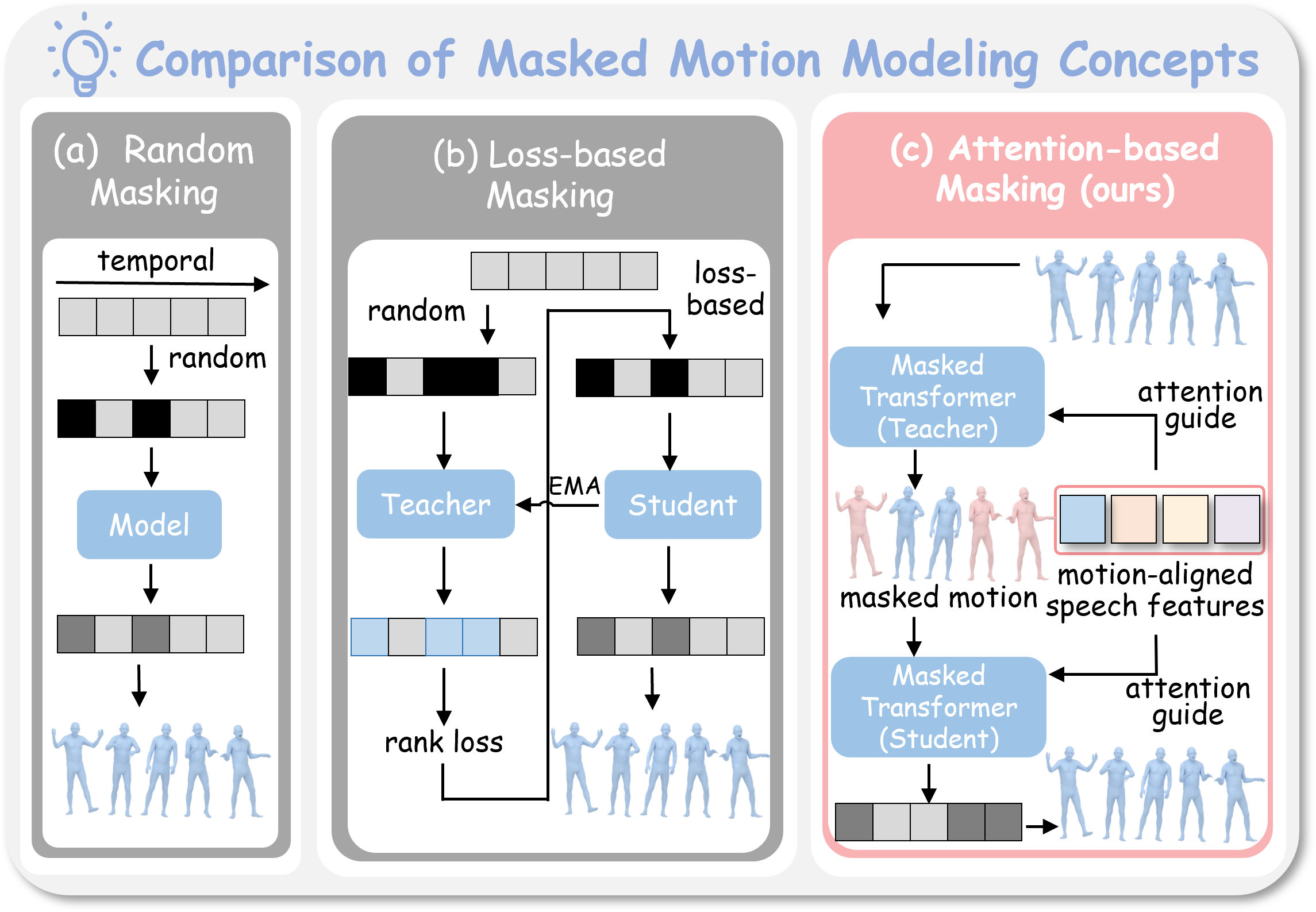

🔬 Comparison of Masked Motion Modeling Concepts

Can speech be used as a query to identify semantically important motion frames worth focusing on during masked modeling?
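One way to read this question in code: compare a random masking baseline with a mask that picks the frames speech queries attend to most. The sketch below is an illustrative assumption, not the paper's exact SQA. It averages per-query softmax attention over frames into one score per frame and masks the top `ceil(mask_ratio * T)` frames; the aggregation rule, the deterministic top-k selection, and all function names are hypothetical.

```python
import math
import random

def softmax(row):
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def frame_attention_scores(speech_queries, motion_keys):
    """Per-frame score: softmax over frames for each query, averaged over queries."""
    d = len(motion_keys[0])
    per_query = []
    for q in speech_queries:
        logits = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in motion_keys]
        per_query.append(softmax(logits))
    n_q = len(speech_queries)
    return [sum(w[t] for w in per_query) / n_q for t in range(len(motion_keys))]

def speech_queried_mask(speech_queries, motion_keys, mask_ratio):
    """Mask the ceil(mask_ratio * T) frames with the highest attention scores."""
    scores = frame_attention_scores(speech_queries, motion_keys)
    num_masked = math.ceil(mask_ratio * len(motion_keys))
    ranked = sorted(range(len(scores)), key=lambda t: scores[t], reverse=True)
    masked = set(ranked[:num_masked])
    return [t in masked for t in range(len(motion_keys))]

def random_mask(num_frames, mask_ratio, rng):
    """Baseline: mask a uniformly random subset of frames."""
    num_masked = math.ceil(mask_ratio * num_frames)
    masked = set(rng.sample(range(num_frames), num_masked))
    return [t in masked for t in range(num_frames)]

# Toy example: one speech query that aligns strongly with motion frame 2.
speech_queries = [[1.0, 0.0]]
motion_keys = [[0.0, 1.0], [0.2, 0.9], [3.0, 0.0], [0.0, -1.0]]
print(speech_queried_mask(speech_queries, motion_keys, mask_ratio=0.25))
# → [False, False, True, False]
print(random_mask(4, 0.25, random.Random(0)))  # some single random frame
```

The random baseline ignores the speech entirely, while the speech-queried variant concentrates masking on the frame whose key best matches the query, which is the intuition behind masking rhythm-related and semantically expressive frames.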

🏗️ Framework

Architecture of EchoMask: speech-queried attention-based mask modeling for co-speech motion generation.

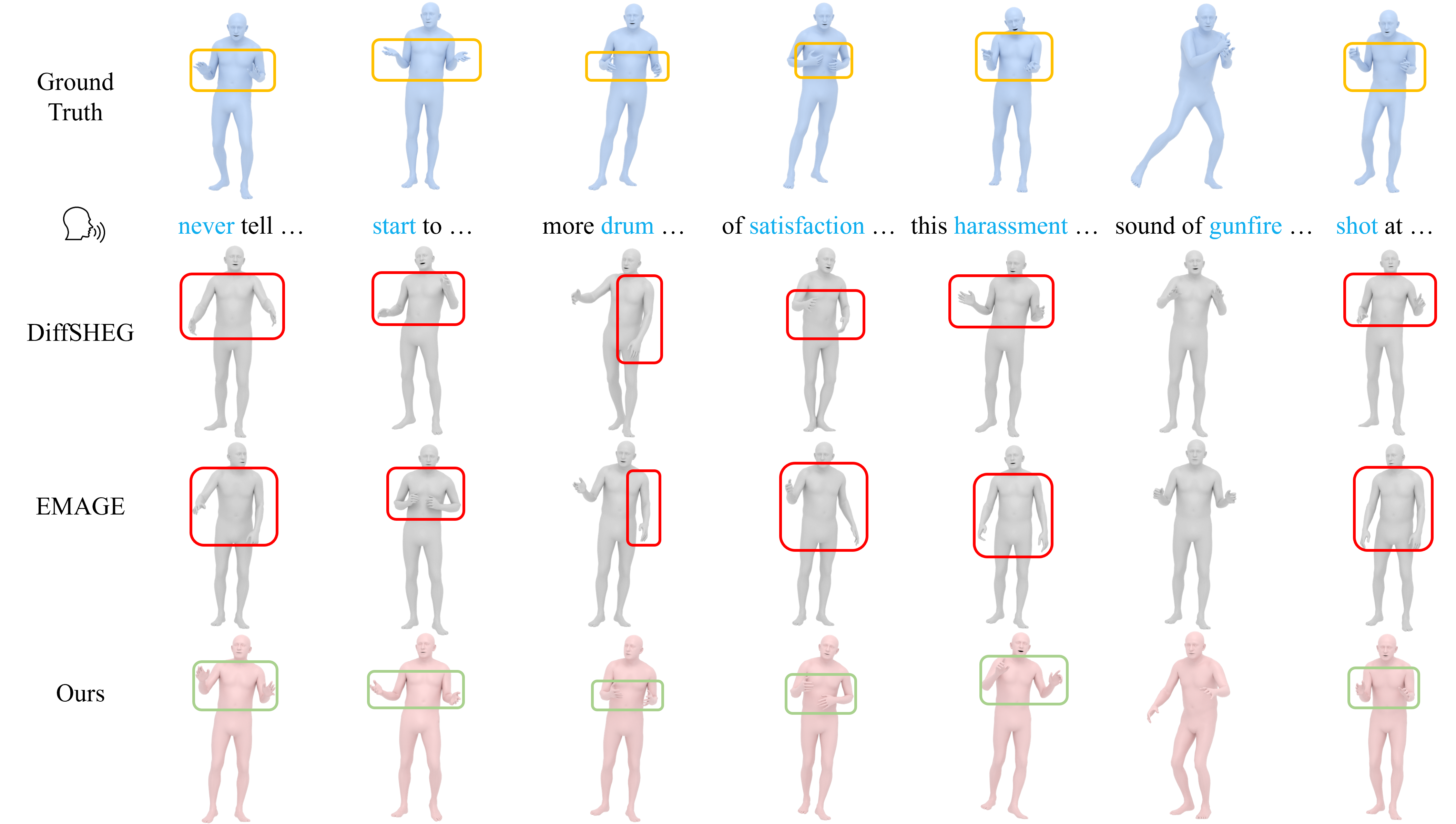

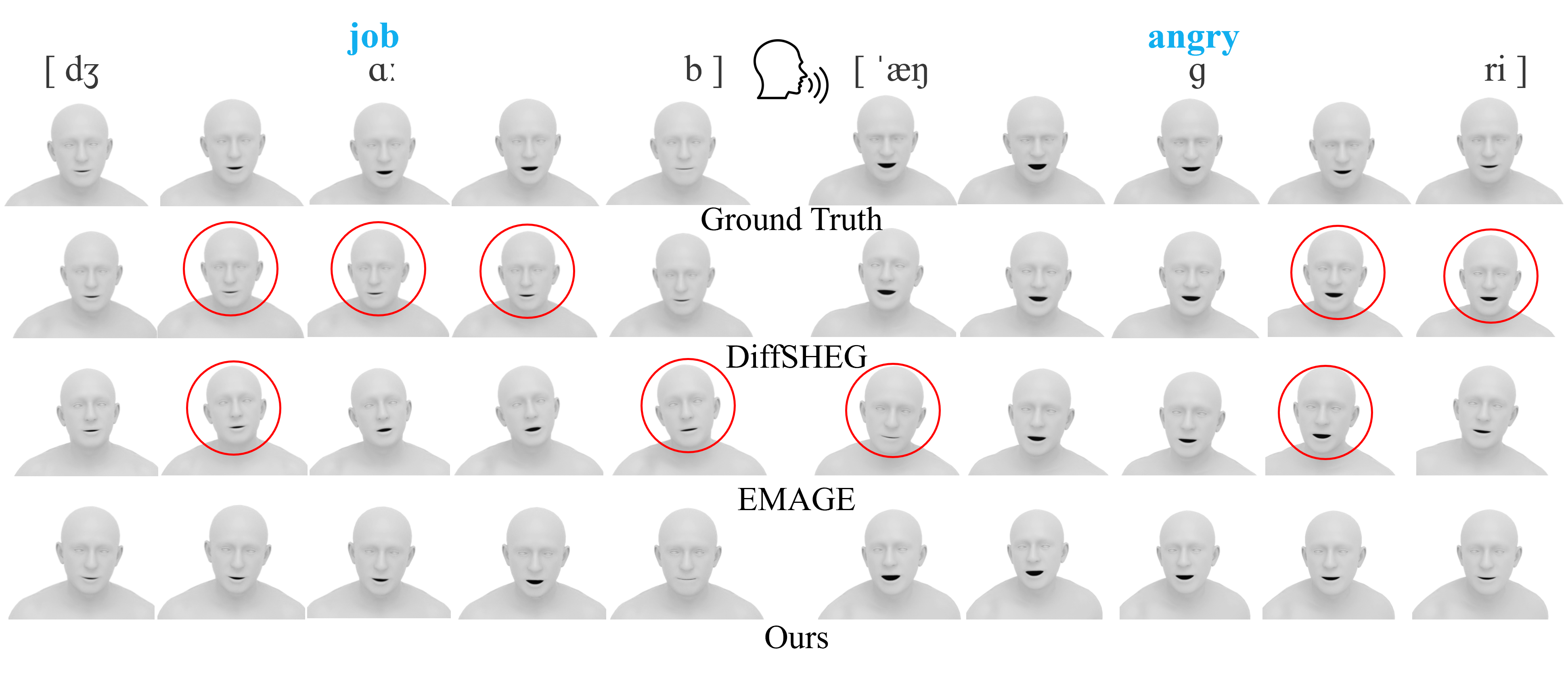

🎨 Qualitative Comparisons

Visual comparisons on body and facial motion generation.

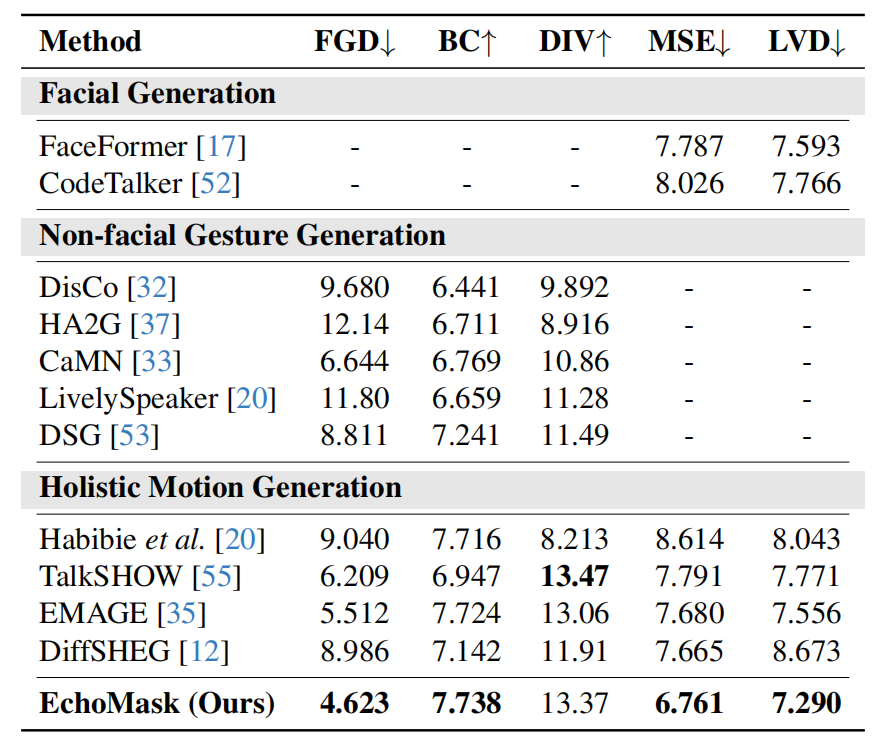

📊 Quantitative Comparisons

Comparison with state-of-the-art methods on standard benchmarks.

📄 BibTeX

@inproceedings{zhang2025echomask,
  title={EchoMask: Speech-Queried Attention-based Mask Modeling for Holistic Co-Speech Motion Generation},
  author={Zhang, Xiangyue and Li, Jianfang and Zhang, Jiaxu and Ren, Jianqiang and Bo, Liefeng and Tu, Zhigang},
  booktitle={Proceedings of the 33rd ACM International Conference on Multimedia},
  pages={10827--10836},
  year={2025}
}