Mitigating Error Accumulation in Co-Speech Motion Generation via Global Rotation Diffusion and Multi-Level Constraints

📋 Abstract

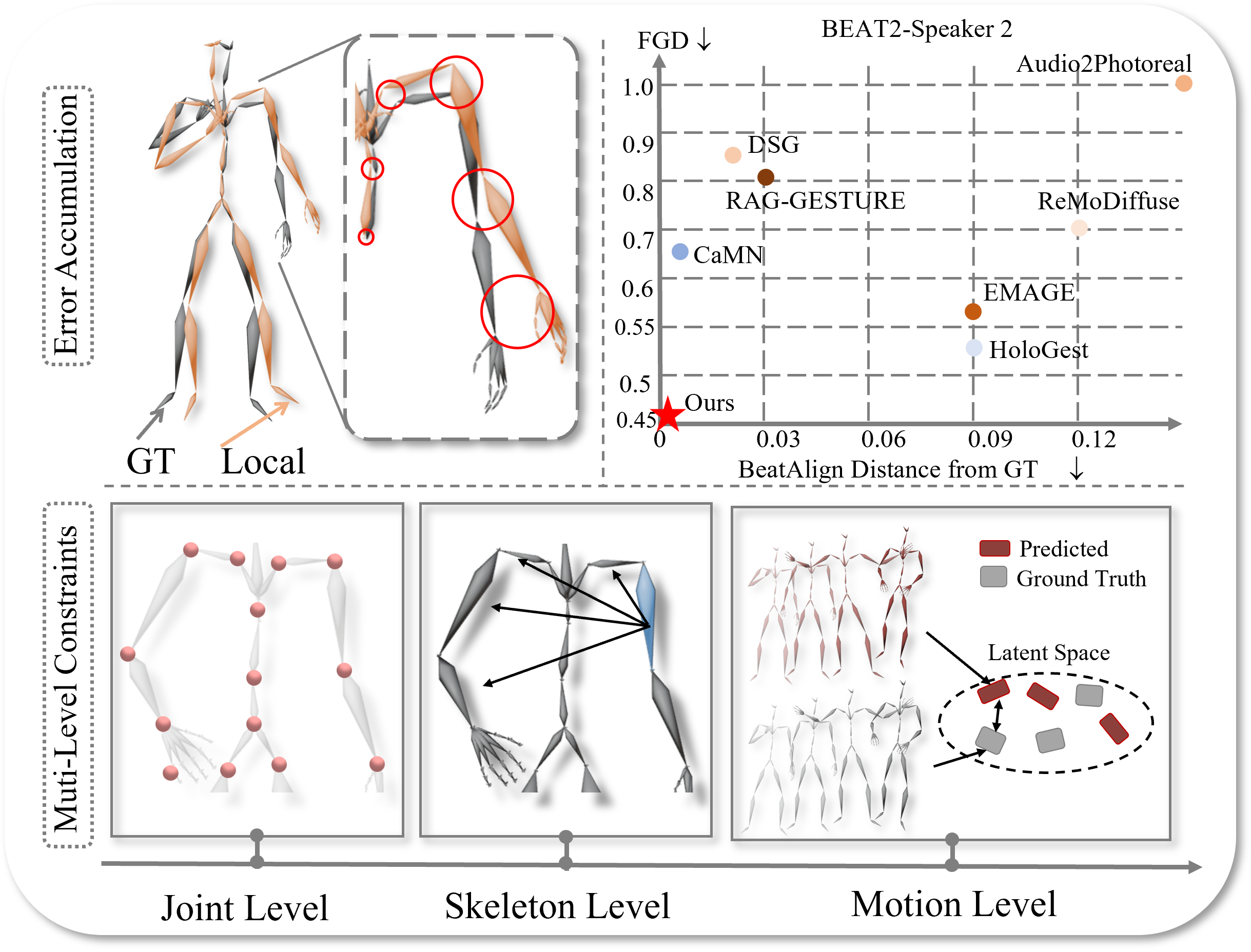

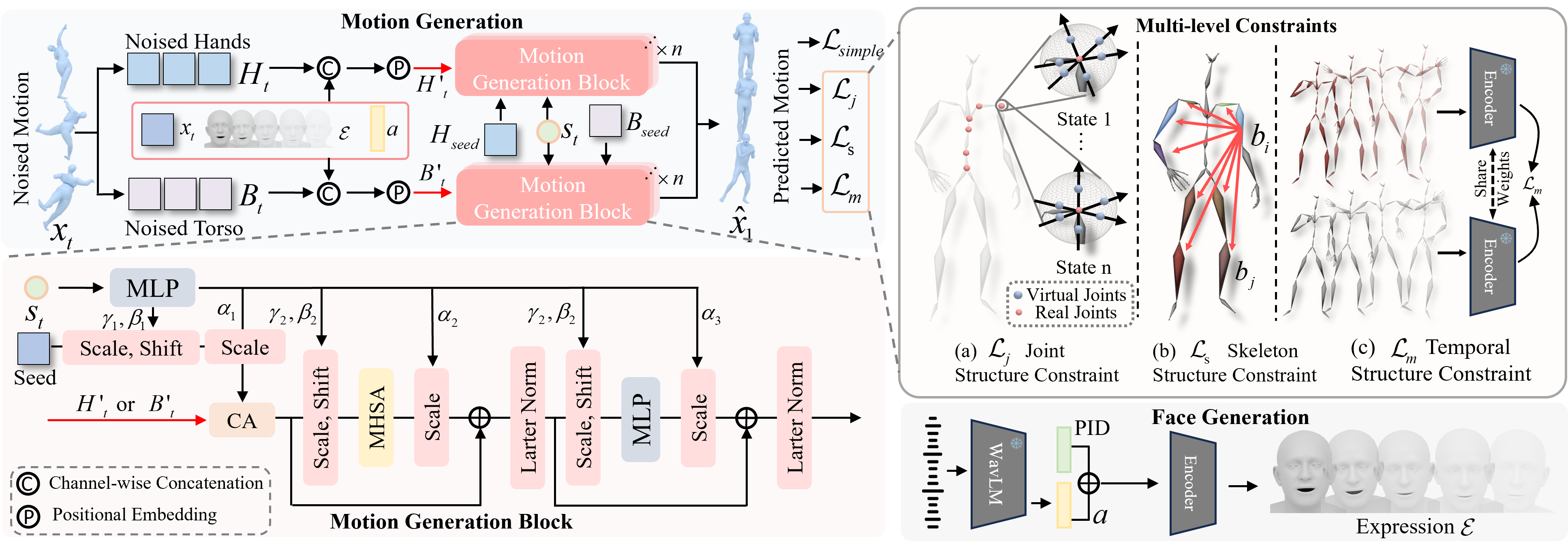

Reliable co-speech motion generation requires precise motion representation and consistent structural priors across all joints. Existing generative methods typically operate on local joint rotations, which are defined hierarchically by the skeleton structure. Errors therefore accumulate along the kinematic chain during generation and manifest as unstable, implausible motion at the end-effectors. In this work, we propose GlobalDiff, the first diffusion-based framework to operate directly in the space of global joint rotations, fundamentally decoupling each joint's prediction from upstream dependencies and alleviating hierarchical error accumulation. To compensate for the absence of structural priors in global rotation space, we introduce a multi-level constraint scheme. Specifically, a joint structure constraint introduces virtual anchor points around each joint to better capture fine-grained orientation. A skeleton structure constraint enforces angular consistency across bones to maintain structural integrity. A temporal structure constraint uses a multi-scale variational encoder to align the generated motion with ground-truth temporal patterns. Together, these constraints regularize the global diffusion process and reinforce structural awareness. Extensive evaluations on standard co-speech benchmarks show that GlobalDiff generates smooth and accurate motions, improving performance by 46.0% over the current state of the art (SOTA) under multiple speaker identities. The code will be released at https://github.com/Xiangyue-Zhang/GlobalDiff.
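The hierarchical error accumulation described above can be illustrated with a toy example. The sketch below is not the GlobalDiff implementation: it assumes a single five-joint chain, rotations about one axis only, and the same small angular error `eps` at every joint. The helper names (`rot_z`, `local_to_global`, `geodesic_angle`) are hypothetical and introduced here only for illustration.

```python
import numpy as np

def rot_z(theta):
    """Rotation matrix for an angle theta (radians) about the z-axis."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

def local_to_global(local_rots):
    """Compose local rotations along one kinematic chain, root first."""
    global_rots, acc = [], np.eye(3)
    for R in local_rots:
        acc = acc @ R          # each joint inherits every upstream rotation
        global_rots.append(acc)
    return global_rots

def geodesic_angle(Ra, Rb):
    """Angle (radians) of the relative rotation between Ra and Rb."""
    cos = (np.trace(Ra.T @ Rb) - 1.0) / 2.0
    return float(np.arccos(np.clip(cos, -1.0, 1.0)))

num_joints = 5   # assumed chain length
eps = 0.02       # assumed per-joint prediction error in radians

true_local = [rot_z(0.3) for _ in range(num_joints)]
true_global = local_to_global(true_local)

# Case 1: the error is made on each LOCAL rotation, then composed down the chain.
noisy_local = [rot_z(0.3 + eps) for _ in range(num_joints)]
from_local = local_to_global(noisy_local)

# Case 2: the same error is made directly on each GLOBAL rotation.
direct_global = [R @ rot_z(eps) for R in true_global]

err_local = [geodesic_angle(a, b) for a, b in zip(true_global, from_local)]
err_global = [geodesic_angle(a, b) for a, b in zip(true_global, direct_global)]

print("error per joint, local prediction :", np.round(err_local, 3))
print("error per joint, global prediction:", np.round(err_global, 3))
```

In this toy setting the orientation error of the local representation grows linearly along the chain and reaches about `5 * eps` at the last joint, while the error of the global representation stays at about `eps` for every joint. Real skeletons branch and real errors are not identical across joints, so this shows only the trend, not its magnitude.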

🏗️ Framework

GlobalDiff: global rotation diffusion augmented with multi-level structural constraints.

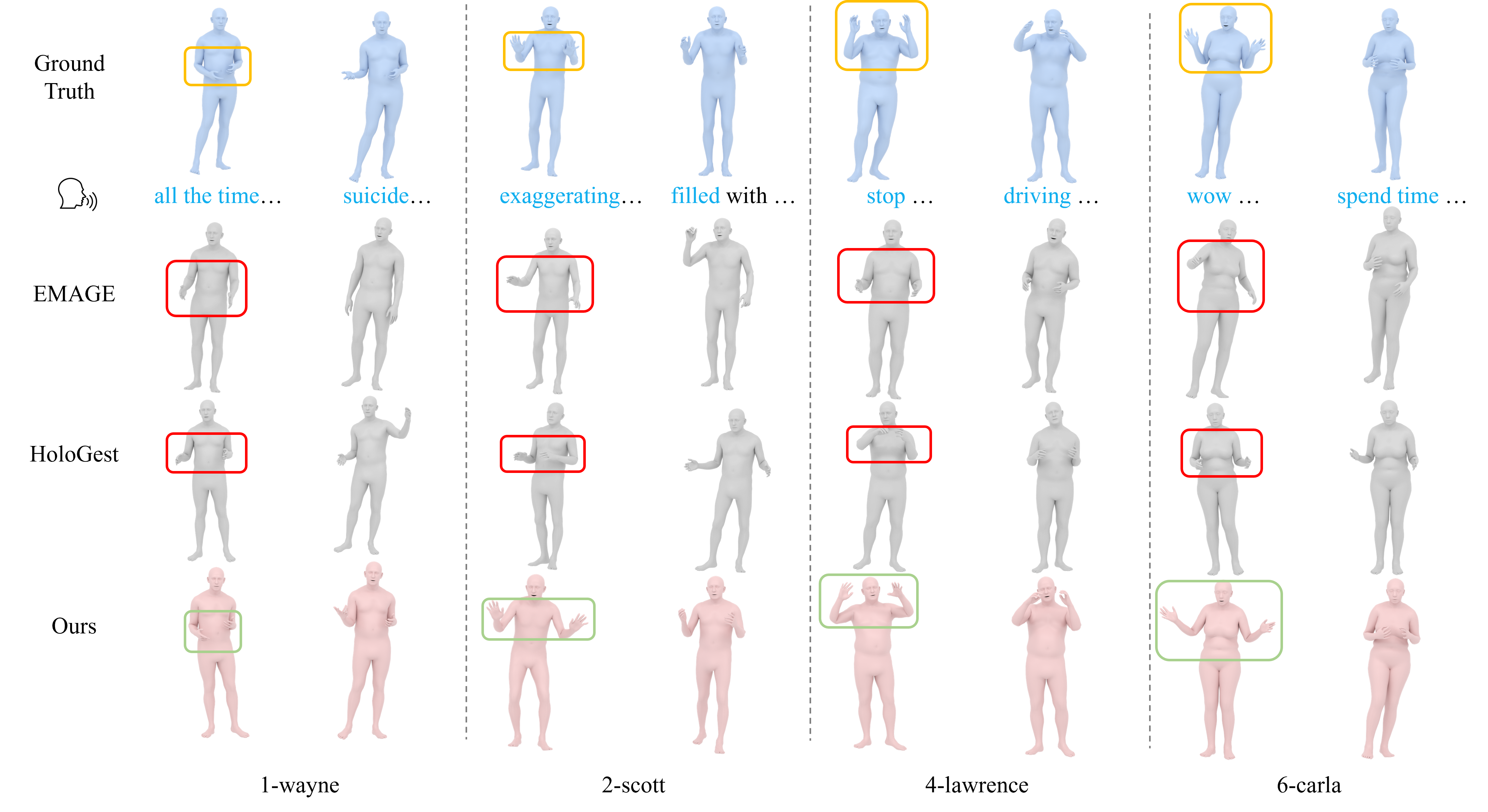
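To make the joint and skeleton structure constraints from the abstract more concrete, here is a minimal sketch of how such losses could be written. The exact formulation used by GlobalDiff is not given on this page, so everything below is an assumption: the anchor offsets (`ANCHORS`), the rest-pose bone directions (`rest_dirs`), the squared-error form of both losses, and the function names `anchor_loss` and `bone_angle_loss` are all hypothetical.

```python
import numpy as np

# Assumed anchor layout: three unit offsets along each joint's local axes.
ANCHORS = np.eye(3)  # shape (A, 3)

def anchor_loss(R_pred, R_gt, anchors=ANCHORS):
    """Mean squared distance between virtual anchor points rotated by the
    predicted and ground-truth global rotations. R_*: (J, 3, 3)."""
    p_pred = np.einsum("jab,kb->jka", R_pred, anchors)  # (J, A, 3)
    p_gt = np.einsum("jab,kb->jka", R_gt, anchors)
    return float(np.mean(np.sum((p_pred - p_gt) ** 2, axis=-1)))

def bone_angle_loss(R_pred, R_gt, parents, rest_dirs):
    """Mean squared difference in the cosine of the angle between each bone
    and its parent bone. parents[j] < 0 marks the root."""
    d_pred = np.einsum("jab,jb->ja", R_pred, rest_dirs)  # bone directions
    d_gt = np.einsum("jab,jb->ja", R_gt, rest_dirs)
    child = np.array([j for j, p in enumerate(parents) if p >= 0])
    par = np.array([parents[j] for j in child])
    cos_pred = np.sum(d_pred[child] * d_pred[par], axis=-1)
    cos_gt = np.sum(d_gt[child] * d_gt[par], axis=-1)
    return float(np.mean((cos_pred - cos_gt) ** 2))

def rot_z(theta):
    """Rotation matrix for an angle theta (radians) about the z-axis."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

# Toy three-joint chain; every bone points along +y in the rest pose.
parents = [-1, 0, 1]
rest_dirs = np.tile(np.array([0.0, 1.0, 0.0]), (3, 1))
R_gt = np.stack([rot_z(0.1), rot_z(0.4), rot_z(0.9)])
R_pred = np.stack([rot_z(0.1), rot_z(0.5), rot_z(0.9)])  # middle joint is off

print("anchor loss    :", anchor_loss(R_pred, R_gt))
print("bone-angle loss:", bone_angle_loss(R_pred, R_gt, parents, rest_dirs))
```

Both losses are zero when the predicted rotations match the ground truth and positive otherwise. The anchor term compares each joint's full orientation in isolation, while the bone-angle term couples neighboring joints, which is the kind of structural prior a purely global representation would otherwise lack. The temporal structure constraint is not sketched here because it depends on a learned encoder whose details are not stated on this page.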

🎨 Qualitative Comparisons

GlobalDiff consistently generates semantically grounded and physically coherent co-speech motions across all speaker identities.

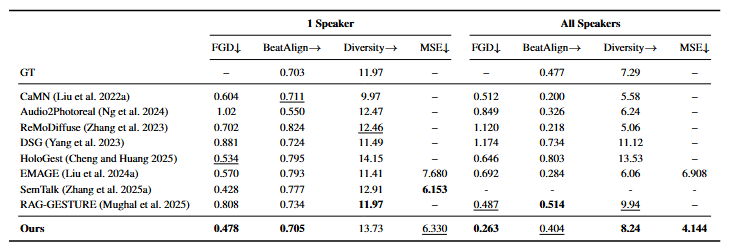

📊 Quantitative Comparisons

GlobalDiff achieves the best performance on nearly all metrics in both single-speaker and multi-speaker settings.

📄 BibTeX

@misc{zhang2025mitigatingerroraccumulationcospeech,
  title={Mitigating Error Accumulation in Co-Speech Motion Generation via Global Rotation Diffusion and Multi-Level Constraints},
  author={Xiangyue Zhang and Jianfang Li and Jianqiang Ren and Jiaxu Zhang},
  year={2025},
  eprint={2511.10076},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2511.10076},
}