SemTalk: Holistic Co-speech Motion Generation with Frame-level Semantic Emphasis

📋 Abstract

Good co-speech motion generation requires careful integration of common rhythmic motion and rare yet essential semantic motion. In this work, we propose SemTalk for holistic co-speech motion generation with frame-level semantic emphasis. Our key insight is to learn general motions and sparse motions separately, and then fuse them adaptively. In particular, rhythmic consistency learning establishes a rhythm-related base motion, providing a coherent foundation that synchronizes gestures with the speech rhythm. Semantic emphasis learning then generates semantic-aware sparse motion, focusing on frame-level semantic cues. Finally, to integrate the sparse motion into the base motion and generate semantic-emphasized co-speech gestures, we leverage a learned semantic score for adaptive synthesis. Qualitative and quantitative comparisons on two public datasets demonstrate that our method outperforms state-of-the-art methods, delivering high-quality co-speech motion with enhanced semantic richness over a stable base motion. The code will be released at https://github.com/Xiangyue-Zhang/SemTalk.

🎬 Video

🏗️ Framework

SemTalk generates holistic co-speech motion in three stages: base motion, sparse motion, and adaptive fusion.
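
To make the third stage concrete, here is a minimal sketch of frame-level fusion driven by a semantic score. It is an illustration under assumptions, not the SemTalk implementation: the function name `fuse_motion`, the representation of a frame as a list of floats, and the convex blending rule are all hypothetical, and the actual method may fuse in a different space or with a different rule.

```python
from typing import List, Sequence

# Hypothetical representation: one pose vector (list of floats) per frame.
Frame = List[float]


def fuse_motion(
    base: Sequence[Frame],
    sparse: Sequence[Frame],
    score: Sequence[float],
) -> List[Frame]:
    """Blend base and sparse motion frame by frame.

    Assumed rule (not taken from the paper): a score of 0 keeps the
    rhythm-related base frame, a score of 1 uses the semantic sparse
    frame, and values in between interpolate linearly.
    """
    if not (len(base) == len(sparse) == len(score)):
        raise ValueError("base, sparse, and score must cover the same frames")

    fused: List[Frame] = []
    for base_frame, sparse_frame, weight in zip(base, sparse, score):
        if len(base_frame) != len(sparse_frame):
            raise ValueError("base and sparse frames must have the same size")
        # Clamp the score to [0, 1] so the blend stays a convex combination.
        weight = min(1.0, max(0.0, weight))
        fused.append(
            [(1.0 - weight) * b + weight * s
             for b, s in zip(base_frame, sparse_frame)]
        )
    return fused


if __name__ == "__main__":
    base = [[0.0, 0.0], [1.0, 1.0], [0.0, 0.0]]
    sparse = [[2.0, 4.0], [3.0, 5.0], [2.0, 4.0]]
    score = [0.0, 1.0, 0.5]  # one semantic score per frame
    print(fuse_motion(base, sparse, score))
```

In this sketch, frames with a low score stay close to the base motion, so semantic gestures only appear where the score marks a frame as semantically important.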

🎨 Qualitative Comparisons

Visual comparisons of body motion, facial motion, and cross-dataset results.

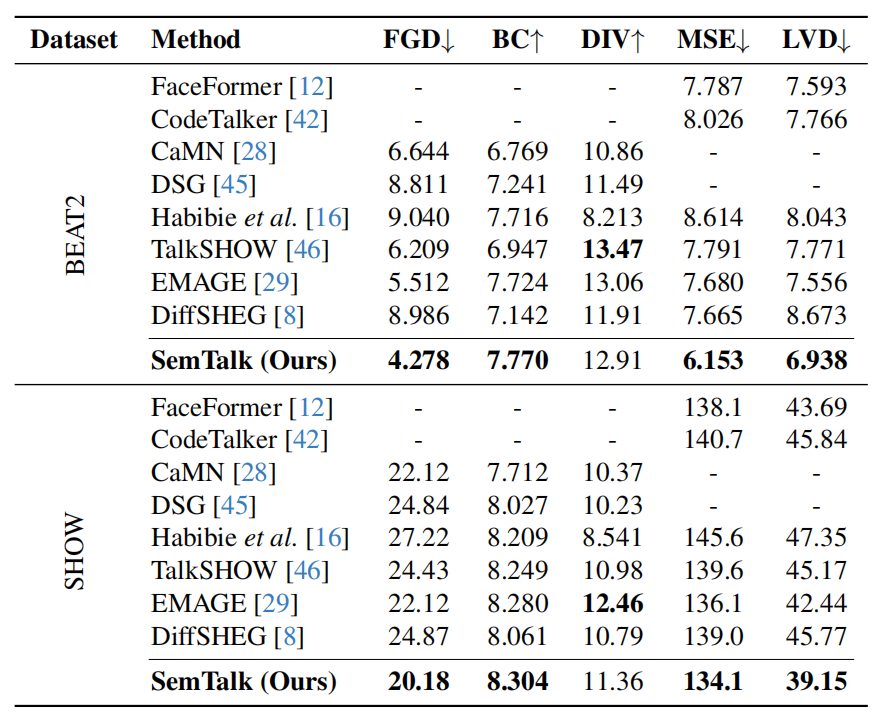

📊 Quantitative Comparisons

SemTalk consistently outperforms state-of-the-art baselines across both BEAT2 and SHOW datasets.

📄 BibTeX

@inproceedings{zhang2025semtalk,
  title={SemTalk: Holistic Co-speech Motion Generation with Frame-level Semantic Emphasis},
  author={Zhang, Xiangyue and Li, Jianfang and Zhang, Jiaxu and Dang, Ziqiang and Ren, Jianqiang and Bo, Liefeng and Tu, Zhigang},
  booktitle={Proceedings of the IEEE/CVF International Conference on Computer Vision},
  pages={13761--13771},
  year={2025}
}