News

Featured Open-Source System

- Technical Report. Deep Researcher Agent: An Autonomous Framework for 24/7 Deep Learning Experimentation with Zero-Cost Monitoring. arXiv preprint arXiv:2604.05854, 2026


Selected Publications

-

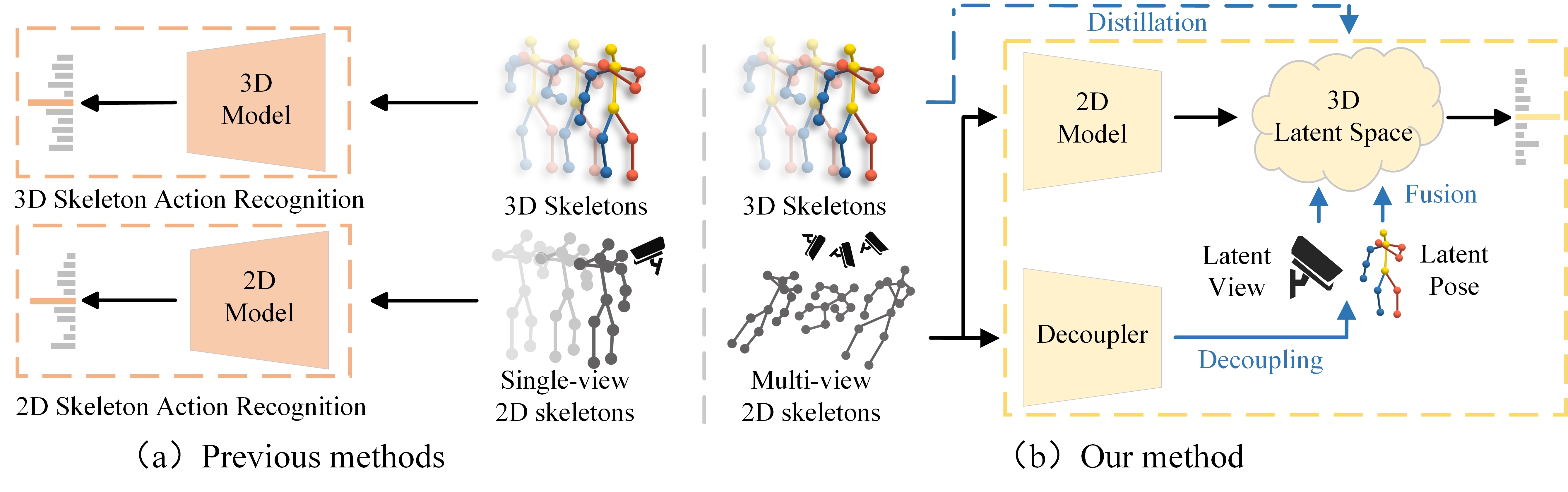

T-CSVT 2025Robust 2D Skeleton Action Recognition via Decoupling and Distilling 3D Latent FeaturesIEEE Transactions on Circuits and Systems for Video Technology (T-CSVT), 2025


Experience & Education

ByteDance

Research Intern, Intelligent Creation Team

Advisor: Youjiang Xu. Working on large streaming motion generation models.

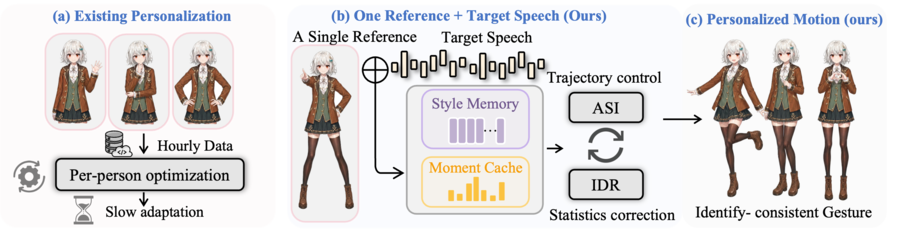

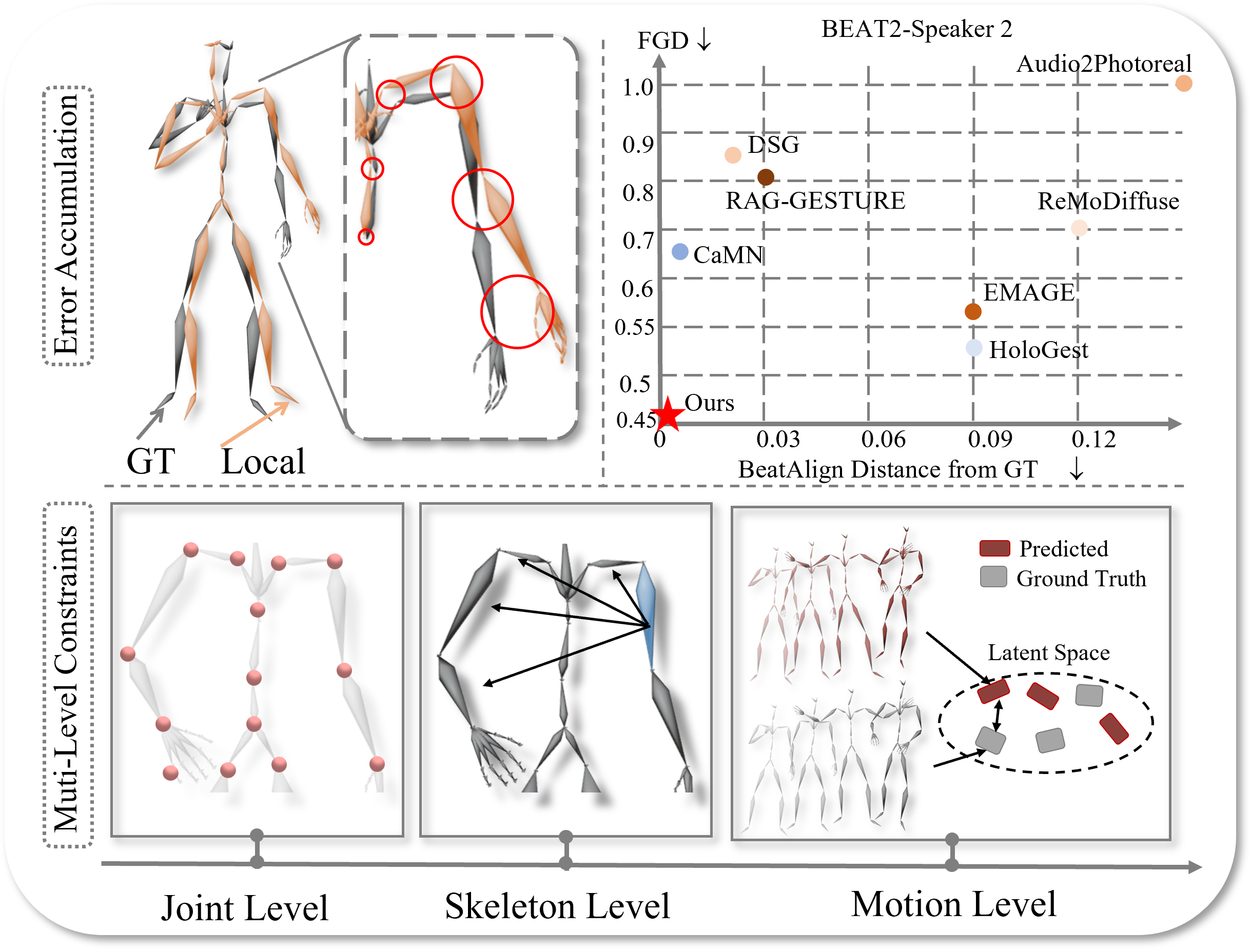

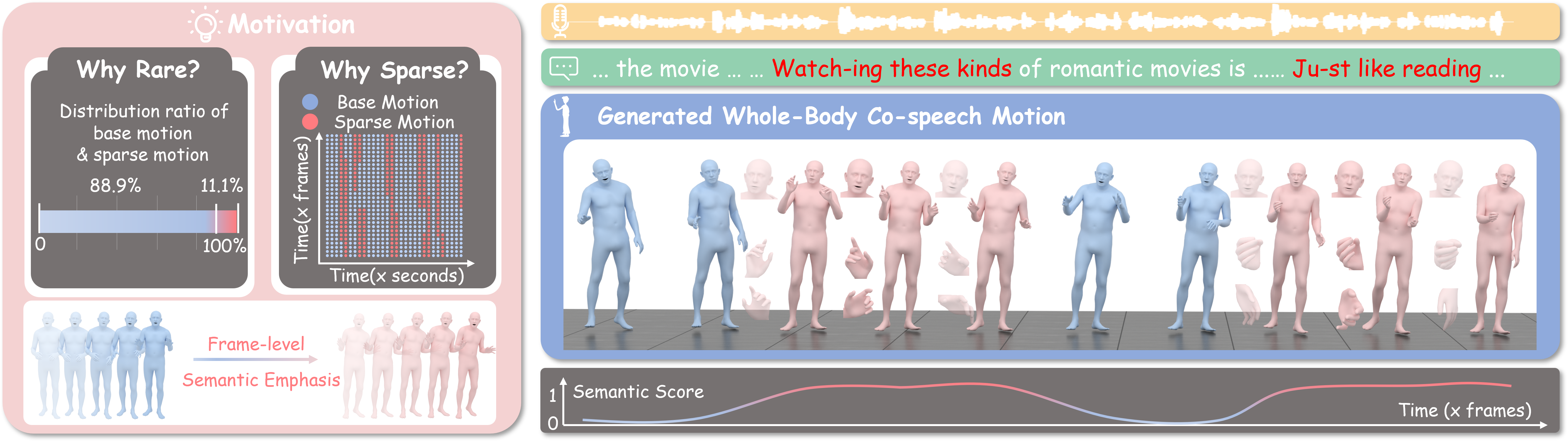

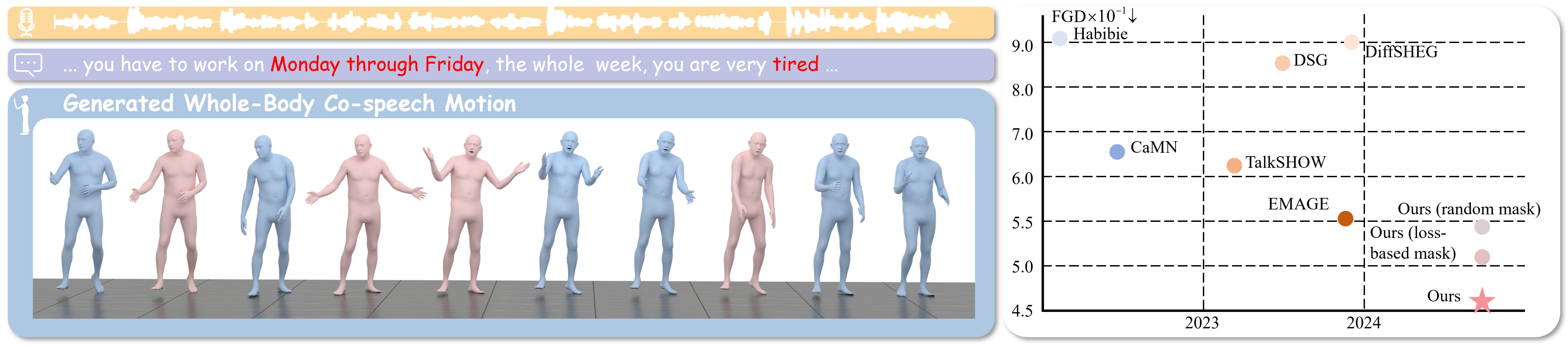

Alibaba

Research Intern, Tongyi Lab

Advisors: Dr. Liefeng Bo and Dr. Jianfang Li. Working on co-speech motion generation.